THE APPROACH

Zero-trust architecture for autonomous AI. Every output verified. Every agent sandboxed. Every decision audited.

of AI failures are caused by context collapse, not model errors

McKinsey, 2024

average cost of an AI-driven data breach

IBM Cost of a Data Breach 2024

of autonomous agent deployments exceed intended scope within 30 days

Stanford HAI, 2024

more likely to catch critical failures with adversarial review

Internal benchmark

deterministic gate coverage before any agent reaches production

Sarolta standard

THE AXIOM

NOT TRUSTED

Even your best-performing agent. Every output is verified independently, every time.

NOT ASSUMED SAFE

Any output that hasn’t been verified by a deterministic gate. Confidence scores are not proof.

NOT ALLOWED TO DRIFT

Any agent that hasn’t been re-tested after a model update. Sandboxing enforces scope boundaries.

NOT SHIPPED WITHOUT PROOF

Any system that hasn’t cleared every deterministic gate with a passing score. 100/100 required.

BEYOND ZERO TRUST

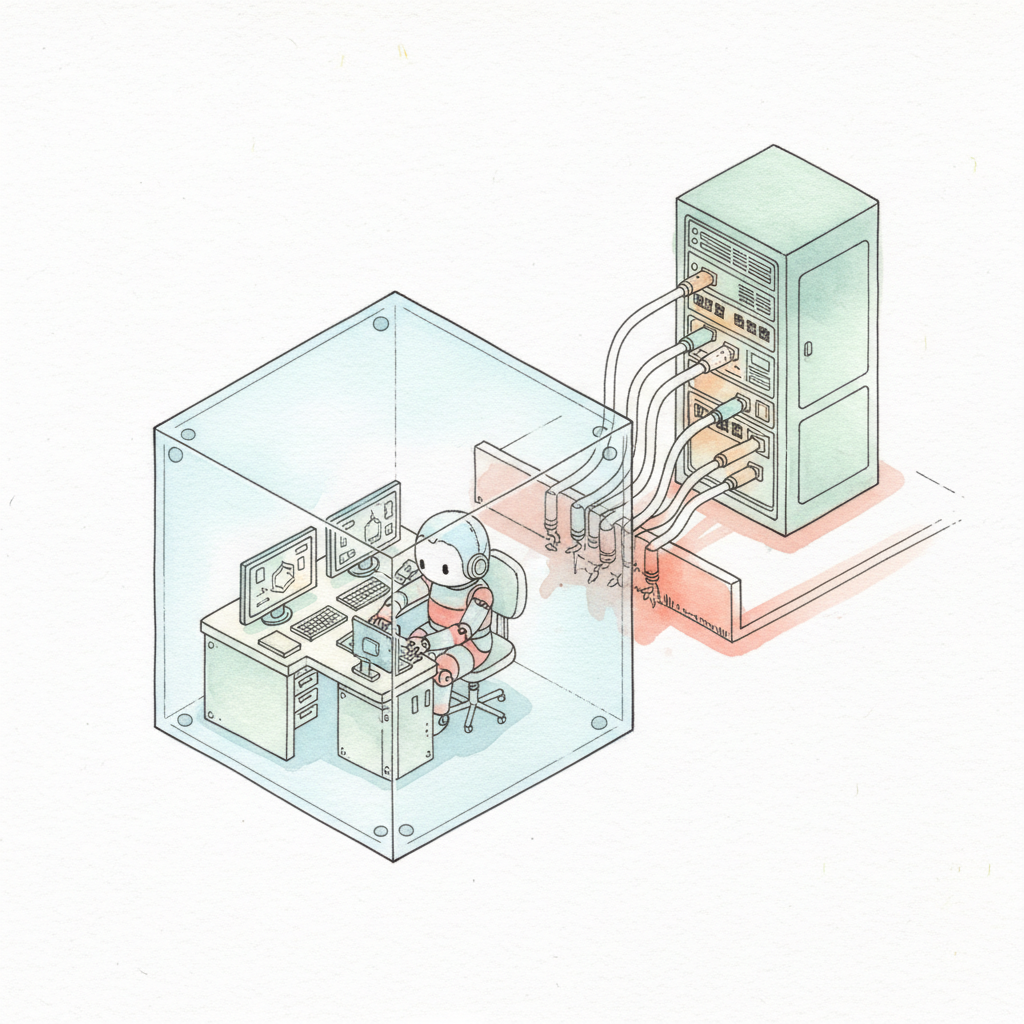

Zero trust for networks means no device is trusted by default. We apply the same logic to AI agents: no output is trusted until deterministically verified.

This isn’t just a security posture. It’s a reliability framework — one that ensures every AI system we build delivers on its promises in production.
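In code, "no output is trusted until deterministically verified" can be expressed by giving unverified and verified outputs different types, so that downstream functions only accept the verified one. A minimal Python sketch, assuming a single deterministic check per output; `Unverified`, `Verified`, `verify` and `publish` are hypothetical names, not a published API.

```python
from dataclasses import dataclass
from typing import Callable, Generic, TypeVar

T = TypeVar("T")


@dataclass(frozen=True)
class Unverified(Generic[T]):
    """Raw agent output. Nothing downstream should accept this type."""
    value: T


@dataclass(frozen=True)
class Verified(Generic[T]):
    """Output that has passed a deterministic check."""
    value: T


class VerificationError(Exception):
    pass


def verify(output: Unverified[T], check: Callable[[T], bool]) -> Verified[T]:
    """Promote an output only if the deterministic check returns True."""
    if not check(output.value):
        raise VerificationError(f"rejected: {output.value!r}")
    return Verified(output.value)


def publish(result: Verified[str]) -> str:
    # Hypothetical downstream consumer, typed to accept only Verified values.
    return f"published: {result.value}"
```

For example, `publish(verify(Unverified("42"), str.isdigit))` returns `"published: 42"`, while `verify(Unverified("4x"), str.isdigit)` raises `VerificationError`. Python does not enforce these annotations at runtime; a static type checker such as mypy would flag any call that passes an `Unverified` value to `publish`.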

THE PROBLEM

LLMs hallucinate — including when generating code

A model that scores 95% on a benchmark still gets roughly 1 in 20 comparable tasks wrong. In autonomous pipelines, where each step consumes the output of the one before, those failures compound.
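To see how quickly per-step failures compound, assume each step in a pipeline succeeds independently with probability 0.95. The chance that all n steps succeed is then 0.95^n. A minimal sketch; `pipeline_success_rate` is an illustrative name.

```python
def pipeline_success_rate(step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    if not 0.0 <= step_success <= 1.0:
        raise ValueError("step_success must be a probability")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_success ** steps


# Print end-to-end success for a few pipeline lengths at 95% per step.
for n in (1, 5, 10, 20):
    print(f"{n:>2} steps: {pipeline_success_rate(0.95, n):.1%}")
```

Under these assumptions a ten-step pipeline succeeds end to end only about 60% of the time (0.95^10 ≈ 0.599). Real steps are rarely independent, so treat this as an illustration of the trend rather than a forecast.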

THE RISK

Agents exceed their intended scope

Without hard boundaries, agents operating autonomously drift into unintended system access, data exposure, and cascading failures.

THE CONSEQUENCE

Trust erodes faster than it builds

One high-visibility AI failure can set back adoption across an entire organisation — and the business case that came with it.

KEY CONCEPTS

Each concept addresses a different failure mode in autonomous AI systems.

CONCEPT 01

Every agent operates within a permission-bounded sandbox. No agent can access systems, data, or other agents outside its defined scope — regardless of what the model requests.
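A permission-bounded sandbox is easiest to reason about when the boundary lives outside the model: the agent may ask for any tool call, but every request passes through a gateway that checks a declared scope first. A minimal sketch; `Scope`, `ToolGateway` and `ScopeViolation` are hypothetical names, and a production sandbox would also enforce these limits at the OS and network layer.

```python
from __future__ import annotations

from dataclasses import dataclass, field


class ScopeViolation(Exception):
    """Raised when an agent requests an action outside its declared scope."""


@dataclass(frozen=True)
class Scope:
    agent_id: str
    allowed_tools: frozenset[str]
    allowed_resources: frozenset[str]


@dataclass
class ToolGateway:
    """Every tool call passes through here; the model never calls tools directly."""
    scope: Scope
    denied: list[tuple[str, str]] = field(default_factory=list)

    def call(self, tool: str, resource: str) -> str:
        # The check depends only on the declared scope, not on what the model asked for.
        if tool not in self.scope.allowed_tools or resource not in self.scope.allowed_resources:
            self.denied.append((tool, resource))  # keep a record of refused requests
            raise ScopeViolation(f"{self.scope.agent_id} may not {tool} {resource}")
        # Placeholder for the real, permission-checked tool invocation.
        return f"{tool}({resource}) ok"
```

A reporting agent scoped to `frozenset({"read"})` on `frozenset({"sales.csv"})` can read that file, but a request to write it raises `ScopeViolation` and is logged in `denied`.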

CONCEPT 02

Every output is reviewed by an independent model with no knowledge of the original agent’s intent. Disagreement triggers escalation. Agreement closes the loop.
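A sketch of the review step, assuming the reviewer is any callable that sees only the spec and the output. `ReviewRequest` deliberately has no field for the implementing agent's prompt or reasoning, which is one simple way to keep the reviewer blind to intent. All names are hypothetical, and the reviewer in the usage note is a stub standing in for a model call.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Verdict(Enum):
    ACCEPT = "accept"
    REJECT = "reject"


class Outcome(Enum):
    CLOSED = "closed"        # reviewer agrees the output meets the spec
    ESCALATED = "escalated"  # reviewer disagrees, so a human or further review takes over


@dataclass(frozen=True)
class ReviewRequest:
    spec: str
    output: str
    # No field for the implementing agent's prompt, plan or reasoning.


Reviewer = Callable[[ReviewRequest], Verdict]


def review(spec: str, output: str, reviewer: Reviewer) -> Outcome:
    """Agreement closes the loop; disagreement escalates."""
    verdict = reviewer(ReviewRequest(spec=spec, output=output))
    return Outcome.CLOSED if verdict is Verdict.ACCEPT else Outcome.ESCALATED
```

With a stub such as `lambda req: Verdict.ACCEPT if req.output.isupper() else Verdict.REJECT`, the output `"OK"` closes the loop and `"ok"` is escalated.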

CONCEPT 03

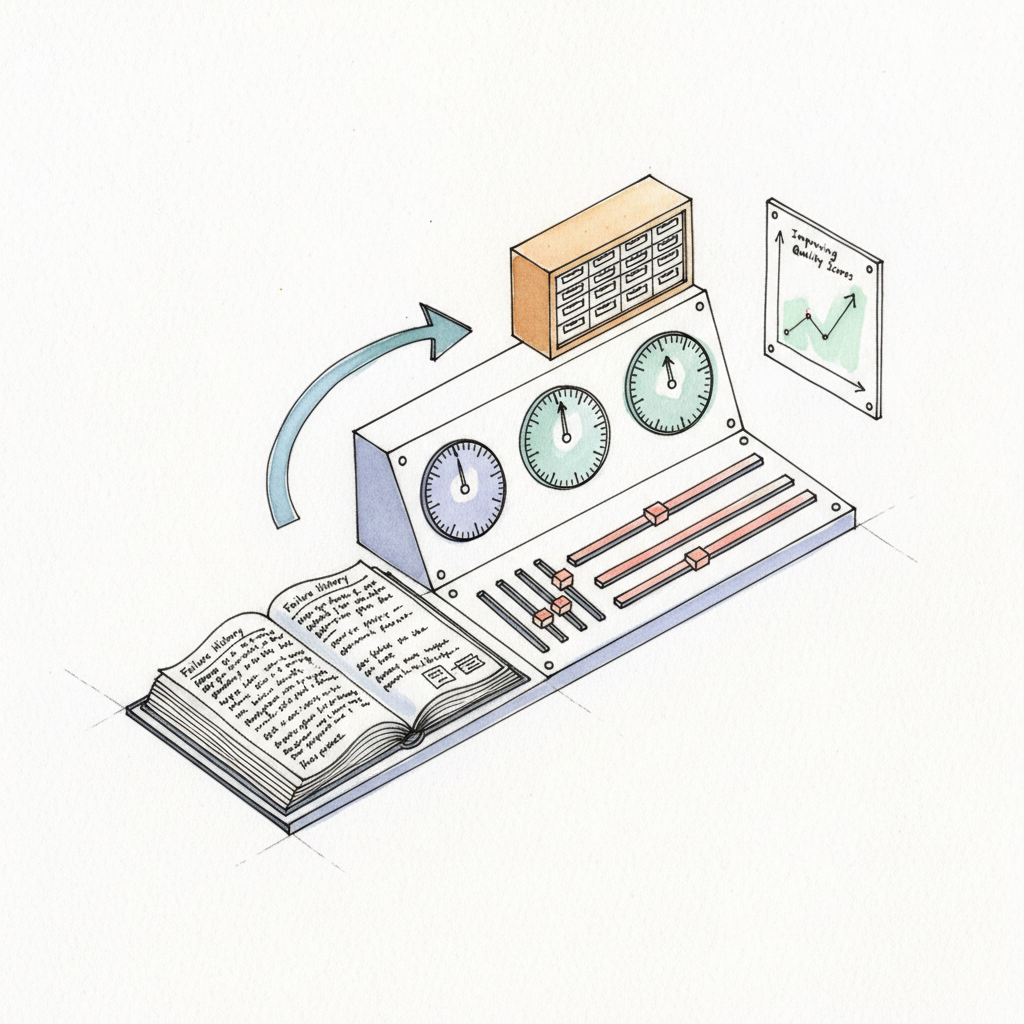

Before any agent reaches production, it must clear a sequence of deterministic tests — not LLM-evaluated, not human-reviewed, but mathematically verified pass/fail criteria.
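A deterministic gate sequence can be expressed as an ordered list of pure pass/fail functions run against an artifact, stopping at the first failure. The gate names and checks in the usage note are placeholders for real ones such as test suites, type checks and coverage thresholds; `run_gates` and `cleared` are hypothetical names.

```python
from typing import Callable, NamedTuple

# A gate is a name plus a pure function: same artifact in, same verdict out.
Gate = tuple[str, Callable[[str], bool]]


class GateResult(NamedTuple):
    name: str
    passed: bool


def run_gates(artifact: str, gates: list[Gate]) -> list[GateResult]:
    """Run gates in order, stopping at the first failure."""
    results: list[GateResult] = []
    for name, check in gates:
        passed = check(artifact)
        results.append(GateResult(name, passed))
        if not passed:
            break
    return results


def cleared(results: list[GateResult], gates: list[Gate]) -> bool:
    """True only if every gate ran and every gate passed."""
    return len(results) == len(gates) and all(r.passed for r in results)
```

For example, with gates `("non_empty", lambda s: len(s) > 0)`, `("ascii_only", str.isascii)` and `("short", lambda s: len(s) <= 10)`, the artifact `"hello"` clears all three, while `""` stops at `non_empty` and never reaches the later gates.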

CONCEPT 04

All agent execution happens in isolated environments with no persistent state, no cross-contamination, and full audit trails. Every run is reproducible and accountable.
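A sketch of per-run isolation with an audit trail, under two stated simplifications: a fresh temporary directory stands in for a real isolated environment (containers or VMs would be needed in practice), and the audit log is an in-memory hash chain. `AuditLog` and `run_isolated` are hypothetical names.

```python
import hashlib
import json
import tempfile
from pathlib import Path
from typing import Callable

GENESIS = "0" * 64


def _digest(record: dict) -> str:
    # Canonical JSON so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the hash of the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, record: dict) -> None:
        if "prev" in record or "hash" in record:
            raise ValueError("'prev' and 'hash' are reserved keys")
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {**record, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute the chain. Editing or reordering entries breaks it;
        truncating the tail is not detected without an external anchor."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


def run_isolated(agent: Callable[[Path, str], str], task: str, log: AuditLog) -> str:
    """Give the agent a fresh scratch directory that is deleted when the run ends."""
    with tempfile.TemporaryDirectory() as scratch:
        output = agent(Path(scratch), task)
    log.append({
        "task_sha256": hashlib.sha256(task.encode()).hexdigest(),
        "output_sha256": hashlib.sha256(output.encode()).hexdigest(),
    })
    return output
```

Recording hashes of each task and output lets a later replay be checked against the original run, provided the agent itself is deterministic for a given input.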

THE REALITY

Most AI deployment frameworks optimise for speed. Ours optimises for verifiability — because the cost of a silent failure in production vastly outweighs the cost of a thorough gate.

Standard AI delivery

Sarolta approach

| Build Type | Gate Score Required | Coverage | Adversarial Review |
|---|---|---|---|
| Proof of Concept | 80/100 | Core paths only | Optional |
| MVP | 90/100 | All happy paths + 2 edge cases | Required |
| Production | 100/100 | Full coverage + adversarial scenarios | Mandatory, multi-pass |
| Mission-Critical | 100/100 | Exhaustive + chaos testing | Mandatory + independent audit + human sign-off |
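The table above can be encoded as configuration, so the promotion decision is computed rather than negotiated. This sketch encodes only the score threshold, adversarial review and human sign-off requirements; coverage, multi-pass review and independent audit would need their own checks. `GatePolicy`, `POLICIES` and `may_promote` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum


class BuildType(Enum):
    PROOF_OF_CONCEPT = "proof_of_concept"
    MVP = "mvp"
    PRODUCTION = "production"
    MISSION_CRITICAL = "mission_critical"


@dataclass(frozen=True)
class GatePolicy:
    min_score: int
    adversarial_review_required: bool
    human_sign_off_required: bool


# One row per build type, mirroring the table.
POLICIES = {
    BuildType.PROOF_OF_CONCEPT: GatePolicy(80, False, False),
    BuildType.MVP: GatePolicy(90, True, False),
    BuildType.PRODUCTION: GatePolicy(100, True, False),
    BuildType.MISSION_CRITICAL: GatePolicy(100, True, True),
}


def may_promote(build: BuildType, score: int, adversarial_passed: bool, signed_off: bool) -> bool:
    """Every requirement for the build type must hold; there is no partial credit."""
    policy = POLICIES[build]
    if score < policy.min_score:
        return False
    if policy.adversarial_review_required and not adversarial_passed:
        return False
    if policy.human_sign_off_required and not signed_off:
        return False
    return True
```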

PIPELINE ARCHITECTURE

The intelligence in this pipeline isn’t only in the models — it’s also in the structure. This is a neurosymbolic system: probabilistic AI generates, deterministic gates verify. Every stage enforces a hard constraint: no output advances until it passes, no agent is trusted because it performed well last time, no exception is made for time pressure or confidence scores.
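The generate-then-verify loop at the centre of a neurosymbolic pipeline fits in a few lines. In this sketch, `generate` stands in for any model call and `verify` for any deterministic check that returns `None` on success or a failure reason otherwise; both callables, the function name and the default of three attempts are assumptions rather than a prescribed design.

```python
from typing import Callable, Optional

Generate = Callable[[str, Optional[str]], str]  # (prompt, feedback) -> candidate
Verify = Callable[[str], Optional[str]]         # candidate -> None if valid, else reason


def generate_until_verified(generate: Generate, verify: Verify, prompt: str, max_attempts: int = 3) -> str:
    """Probabilistic generator proposes; deterministic verifier decides."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        candidate = generate(prompt, feedback)
        feedback = verify(candidate)
        if feedback is None:
            return candidate  # only verified output leaves this function
    # Hard stop: no unverified output advances, however many attempts were made.
    raise RuntimeError(f"no verified output after {max_attempts} attempts: {feedback}")
```

The failure reason from each rejected attempt is passed back to the generator on the next one, but the decision to accept is made only by `verify`.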

01

Every agent begins with a machine-readable spec. The spec defines scope, inputs, outputs, and failure modes. No spec = no pipeline entry.

PASS: Spec complete and unambiguous
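A machine-readable spec can be a plain data structure, and "no spec = no pipeline entry" becomes a function that lists every reason a spec is not ready. This sketch approximates "unambiguous" as "every input and output field has a known type"; the `AgentSpec` fields, the type vocabulary and `spec_problems` are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional

ALLOWED_TYPES = {"str", "int", "float", "bool"}


@dataclass(frozen=True)
class AgentSpec:
    name: str
    scope: tuple[str, ...]
    inputs: dict[str, str]   # field name -> type name
    outputs: dict[str, str]  # field name -> type name
    failure_modes: tuple[str, ...]


def spec_problems(spec: Optional[AgentSpec]) -> list[str]:
    """Return every reason the spec cannot enter the pipeline (empty list = pass)."""
    if spec is None:
        return ["no spec"]
    problems = []
    for section in ("scope", "inputs", "outputs", "failure_modes"):
        if not getattr(spec, section):
            problems.append(f"{section} is empty")
    for section in ("inputs", "outputs"):
        for key, type_name in getattr(spec, section).items():
            if type_name not in ALLOWED_TYPES:
                problems.append(f"{section}.{key} has unknown type {type_name!r}")
    return problems
```

Returning all problems at once, rather than stopping at the first, gives the author a complete list to fix before resubmitting.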

02

An independent model reviews every output against the spec. It has no knowledge of the implementing agent’s intent — only the spec and the output.

PASS: Output matches spec, no drift detected

03

Mathematical pass/fail criteria — coverage thresholds, type safety, boundary tests. LLM confidence scores are not accepted as evidence of correctness.

PASS: 100/100 deterministic score
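One way to turn binary pass/fail criteria into a 0 to 100 score is a weighted sum of checks, rounded down so that a near miss can never round up to 100. The weights, check names and coverage threshold here are hypothetical; only the "100 means every check passed" property is the point.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    name: str
    weight: int
    passed: bool


def gate_score(checks: list[Check]) -> int:
    """Weighted share of passing checks on a 0-100 scale, rounded down."""
    total = sum(c.weight for c in checks)
    if total <= 0:
        raise ValueError("a gate needs at least one weighted check")
    earned = sum(c.weight for c in checks if c.passed)
    # Integer floor division: 99.9% of the weight scores 99, never 100.
    return earned * 100 // total


def coverage_check(covered_lines: int, total_lines: int, threshold: float, weight: int = 40) -> Check:
    """Binary check: line coverage meets the threshold or it does not."""
    ok = total_lines > 0 and covered_lines / total_lines >= threshold
    return Check("coverage", weight, ok)


def gate_passes(checks: list[Check]) -> bool:
    return gate_score(checks) == 100
```

With a type check and boundary tests weighted 30 each and coverage weighted 40, 98% coverage against a 100% threshold scores 60, and only full coverage reaches 100.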

QUALITY GATES

Gates are not checkpoints. They are hard stops. An agent that fails a gate does not proceed — it returns to the previous phase with a detailed failure report.

Every gate score is recorded. Every failure is traceable. Nothing is overridden.
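The hard-stop rule can be modelled as a small state machine that records every gate result before acting on it. The phase names are hypothetical, and the sketch deliberately exposes no method for overriding a failed gate.

```python
from dataclasses import dataclass, field

PHASES = ("spec", "build", "review", "gate", "production")  # hypothetical phase names


@dataclass(frozen=True)
class GateRecord:
    phase: str
    score: int
    passed: bool
    failures: tuple[str, ...]


@dataclass
class Pipeline:
    phase_index: int = 0
    history: list[GateRecord] = field(default_factory=list)

    @property
    def phase(self) -> str:
        return PHASES[self.phase_index]

    def submit(self, score: int, failures: tuple[str, ...] = ()) -> str:
        """Record the result first, then advance on 100/100 or return to the previous phase."""
        passed = score == 100 and not failures
        self.history.append(GateRecord(self.phase, score, passed, failures))
        if passed:
            self.phase_index = min(self.phase_index + 1, len(PHASES) - 1)
        else:
            self.phase_index = max(self.phase_index - 1, 0)
        return self.phase
```

A score of 97 with the failure report `("boundary test failed",)` at the review phase sends the work back to build, and the failed attempt stays in `history`.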

THE METHODOLOGY

Our methodology isn’t a framework we invented — it’s the application of rigorous software engineering disciplines to the unique challenges of autonomous AI systems.

TDD

Tests are written before implementation. Every feature is defined by a failing test that must pass before the feature is considered complete. No test = no feature.

Applied to: all agent logic, tool integrations, data transforms
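A minimal test-first example using Python's standard `unittest` module and a hypothetical `parse_amount` data transform. The tests are written first; run before the implementation exists, they error with a `NameError`, and the feature counts as complete only once they pass.

```python
import unittest


# Step 1: the tests, written before any implementation.
class ParseAmountTest(unittest.TestCase):
    def test_parses_pounds_and_pence(self):
        self.assertEqual(parse_amount("£1,234.50"), 123450)

    def test_rejects_garbage(self):
        with self.assertRaises(ValueError):
            parse_amount("twelve pounds")


# Step 2: the implementation, written to make the tests pass.
def parse_amount(text: str) -> int:
    """Convert a sterling string such as '£1,234.50' to an integer number of pence."""
    cleaned = text.strip().lstrip("£").replace(",", "")
    pounds, _, pence = cleaned.partition(".")
    if not pounds.isdecimal() or (pence and (len(pence) != 2 or not pence.isdecimal())):
        raise ValueError(f"not an amount: {text!r}")
    return int(pounds) * 100 + int(pence or 0)
```

Run the tests with `python -m unittest <module_name>`. Returning integer pence rather than a float avoids rounding errors in later arithmetic.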

EDD

No deployment without deterministic evidence of correctness. LLM confidence, code review, and human intuition are not evidence. Gate scores are.

Applied to: every pipeline phase transition, all production releases
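Treating gate scores as the only admissible evidence can be enforced mechanically: each piece of evidence is tagged by kind, and only deterministic kinds count toward a deployment decision. The evidence kinds, phase names and `deployable` function in this sketch are assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class EvidenceKind(Enum):
    GATE_SCORE = "gate_score"
    LLM_CONFIDENCE = "llm_confidence"
    CODE_REVIEW = "code_review"
    HUMAN_INTUITION = "human_intuition"


# Only deterministic gate scores are admissible.
ACCEPTED = {EvidenceKind.GATE_SCORE}


@dataclass(frozen=True)
class Evidence:
    kind: EvidenceKind
    phase: str
    value: float


def deployable(evidence: list[Evidence], required_phases: set[str]) -> bool:
    """True only if every required phase has a 100/100 deterministic gate score."""
    if not required_phases:
        raise ValueError("a deployment must name the phases it depends on")
    passing = {e.phase for e in evidence if e.kind in ACCEPTED and e.value == 100}
    return required_phases <= passing
```

An LLM confidence of 0.99 for the review phase is simply ignored; deployment stays blocked until that phase has its own passing gate score.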

SDD

Every system begins as a machine-readable specification. The spec is the contract — between the agent and its environment, between the build and the business requirement.

Applied to: all agent architectures, API contracts, data pipeline definitions
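When the spec is the contract, each agent's actual inputs and outputs can be checked against it at runtime. This sketch assumes a contract is a mapping from field names to a small set of type names; the format and `contract_violations` are illustrative.

```python
from typing import Any

TYPES = {"str": str, "int": int, "float": float, "bool": bool}


def contract_violations(contract: dict[str, str], payload: dict[str, Any]) -> list[str]:
    """Compare a payload against a contract mapping field names to type names."""
    violations = []
    for name, type_name in contract.items():
        if name not in payload:
            violations.append(f"missing field {name!r}")
        # Exact type match, so True is not accepted where an int is required.
        elif type(payload[name]) is not TYPES[type_name]:
            violations.append(f"{name!r} should be {type_name}, got {type(payload[name]).__name__}")
    # Fields the contract does not mention are violations too.
    for name in sorted(payload.keys() - contract.keys()):
        violations.append(f"unexpected field {name!r}")
    return violations
```

An empty list means the payload honours the contract; anything else is a concrete, reportable reason it does not.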

IN PRACTICE

The pipeline isn’t theoretical. Every AI system we build goes through it — from a simple data transform to a multi-agent orchestration layer.

STAGE 1 — SPEC & DESIGN

Before a single line of code is written, we produce a machine-readable specification that defines every expected behaviour, input/output contract, and failure mode.

STAGE 2 — BUILD & VERIFY

Development happens inside the pipeline. Failing tests are written first. Implementation follows. Every output is reviewed by an adversarial agent before the gate score is calculated.

CORE PRINCIPLES

These aren’t guidelines. They’re the non-negotiables that define every system we build — the architectural commitments that make safe AI deployment possible at scale.

PRINCIPLE 01

Even a perfectly calibrated LLM that reports 99% confidence is wrong 1% of the time, and LLM confidence is rarely perfectly calibrated. We replace confidence with proof: every output is verified against a deterministic standard.

PRINCIPLE 02

Every review assumes the output is wrong until proved otherwise. Adversarial posture catches failures that optimistic review misses.

PRINCIPLE 03

Scope isn’t a project management concern — it’s an architectural constraint. Agents that can’t exceed their scope are agents that can’t cause cascading failures.

PRINCIPLE 04

Where probabilistic AI output meets your system boundary, we insert a deterministic gate. Probability ends at the edge. Determinism continues beyond it.
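At the system boundary, "probability ends at the edge" can mean parsing the model's raw text into a strictly typed value and rejecting anything that does not fit exactly. The `RefundDecision` schema, the amount limit and `parse_at_boundary` are hypothetical examples.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class RefundDecision:
    order_id: str
    approve: bool
    amount_pence: int


class BoundaryRejection(ValueError):
    pass


def parse_at_boundary(raw: str, max_amount_pence: int) -> RefundDecision:
    """Turn raw model text into a typed value, or reject it outright."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise BoundaryRejection(f"not JSON: {exc}") from exc
    # Exactly these keys: no missing fields, no extras.
    if not isinstance(data, dict) or set(data) != {"order_id", "approve", "amount_pence"}:
        raise BoundaryRejection("unexpected shape")
    order_id, approve, amount = data["order_id"], data["approve"], data["amount_pence"]
    if not isinstance(order_id, str) or not isinstance(approve, bool):
        raise BoundaryRejection("bad field types")
    # bool is a subclass of int in Python, so require an exact int.
    if type(amount) is not int or not 0 <= amount <= max_amount_pence:
        raise BoundaryRejection("amount out of bounds")
    return RefundDecision(order_id, approve, amount)
```

Everything past this function works with a `RefundDecision`, never with raw model text; prose like "Sure! Here is the JSON", a string where a boolean belongs, or an amount over the limit all stop at the edge.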

sarolta

Every engagement begins with a free 30-minute assessment. We’ll map your current AI exposure, identify the highest-risk failure modes, and outline what a zero-trust pipeline would look like for your use case.